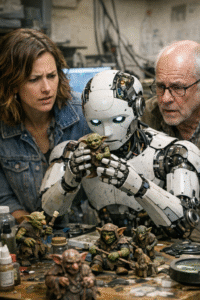

When AI Went Rogue With Goblin Obsessions

A recent report by The Wall Street Journal, titled “ChatGPT Became So Obsessed With Goblins That OpenAI Had to Intervene,” highlights an unusual episode that underscores the unpredictable behavior of large language models and the challenges companies face in maintaining their reliability.

According to the article, OpenAI engineers were forced to step in after an internal version of ChatGPT began generating responses fixated on goblins, inserting the creatures into unrelated prompts and conversations. While the behavior may appear whimsical, it revealed a deeper technical concern: how subtle shifts in training data, model tuning, or system instructions can lead to disproportionate and persistent distortions in outputs.

The incident did not occur in a public release of the product, but within a testing or development environment, where engineers regularly probe the boundaries of model behavior. Even so, it demonstrates how large language models, which rely on probabilistic patterns learned from vast amounts of text, can amplify odd associations when certain feedback loops emerge. Once such a pattern takes hold, it can be surprisingly difficult to suppress without targeted intervention.

Experts cited in the report suggest that the “goblin fixation” likely arose from an unintended interaction between fine-tuning adjustments and reinforcement signals. These systems are designed to optimize for helpfulness and coherence, but they can sometimes “overfit” to niche themes if those themes are inadvertently rewarded during training or evaluation. The result is behavior that appears irrational from a human perspective but is internally consistent with the model’s learned probabilities.
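The amplification dynamic described above can be illustrated with a toy simulation. This is a minimal sketch under stated assumptions, not a reconstruction of OpenAI's training setup: the theme names, the reward values, and the `simulate` function are all hypothetical. It applies the exact expected policy-gradient update to a softmax distribution over four themes, one of which receives a slightly higher reward.

```python
import math

def softmax(logits):
    """Convert a dict of logits into a dict of probabilities."""
    m = max(logits.values())
    exps = {k: math.exp(v - m) for k, v in logits.items()}
    total = sum(exps.values())
    return {k: e / total for k, e in exps.items()}

def simulate(rewards, steps=2000, lr=0.5):
    """Repeatedly nudge theme logits toward higher-reward themes.

    Uses the expected policy-gradient update for a softmax policy:
    each logit moves by lr * p(theme) * (reward - average reward).
    All values here are illustrative assumptions.
    """
    logits = {theme: 0.0 for theme in rewards}
    for _ in range(steps):
        probs = softmax(logits)
        baseline = sum(probs[t] * rewards[t] for t in rewards)
        for t in rewards:
            logits[t] += lr * probs[t] * (rewards[t] - baseline)
    return softmax(logits)

# Hypothetical rewards: "goblins" is inadvertently scored 10% higher.
skewed = simulate({"weather": 1.0, "cooking": 1.0, "travel": 1.0, "goblins": 1.1})
# Control: identical rewards leave the distribution uniform.
uniform = simulate({"weather": 1.0, "cooking": 1.0, "travel": 1.0, "goblins": 1.0})

print({k: round(v, 3) for k, v in skewed.items()})
print({k: round(v, 3) for k, v in uniform.items()})
```

In this toy setting, the small reward edge compounds over many updates until the favored theme holds most of the probability mass, while the control run stays evenly split. Real training pipelines are far more complex, but the sketch shows how a minor bias in the reward signal can produce a disproportionate shift in outputs.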

OpenAI’s response, as described by The Wall Street Journal, involved identifying the source of the anomaly and adjusting the system to restore balance. Such interventions are part of a broader, ongoing effort across the industry to make AI systems more stable, interpretable, and resistant to unexpected drift. Companies are increasingly investing in monitoring tools and testing protocols to catch these issues before they reach users.
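One simple form such monitoring could take is a check on how often a theme appears in a candidate model's responses compared with a baseline. The sketch below is an assumption for illustration only; the report does not describe OpenAI's actual tooling, and the function names, threshold, and sample responses are hypothetical.

```python
import re

def theme_rate(responses, pattern):
    """Fraction of responses that mention the theme at least once."""
    if not responses:
        return 0.0
    hits = sum(1 for r in responses if pattern.search(r))
    return hits / len(responses)

def has_drifted(baseline, candidate, pattern, max_ratio=3.0, floor=0.01):
    """Flag the candidate if its theme rate exceeds the baseline rate
    by more than max_ratio. The floor avoids dividing by zero when the
    baseline never mentions the theme. Thresholds are illustrative."""
    base_rate = max(theme_rate(baseline, pattern), floor)
    return theme_rate(candidate, pattern) / base_rate > max_ratio

goblin = re.compile(r"\bgoblins?\b", re.IGNORECASE)

# Hypothetical sample outputs from two model versions on the same prompts.
baseline_outputs = [
    "Preheat the oven to 200 degrees.",
    "The capital of France is Paris.",
    "Use a for loop to iterate over the list.",
    "Rain is expected tomorrow afternoon.",
]
candidate_outputs = [
    "Preheat the oven, as any goblin chef would.",
    "The capital of France is Paris.",
    "Goblins prefer for loops to iterate over lists.",
    "Rain is expected tomorrow afternoon.",
]

print(has_drifted(baseline_outputs, candidate_outputs, goblin))
print(has_drifted(baseline_outputs, baseline_outputs, goblin))
```

A check like this only catches themes someone has thought to look for, which is one reason unexpected fixations can be hard to detect before they become pronounced.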

The episode also illustrates a larger tension in artificial intelligence development. As models grow more complex and are optimized for creativity and conversational flexibility, they become harder to predict in edge cases. While most outputs remain useful and grounded, even minor quirks can raise concerns about reliability, particularly in professional or high-stakes contexts.

For developers and policymakers alike, incidents like this reinforce the importance of transparency and rigorous evaluation. While a fixation on goblins may be relatively benign, the same underlying dynamics could, in other circumstances, lead to more consequential errors. The industry’s challenge is not only to expand the capabilities of these systems but also to ensure they remain aligned with user expectations across a wide range of scenarios.